Why this matters: You cannot improve what you cannot measure. Building reliable AI applications requires robust testing and evaluation strategies beyond simple string matching.

The LLM Evaluation Dilemma

Prompt: "Why is the sky blue?"

"Due to Rayleigh scattering."

"Gases in the atmosphere scatter sunlight."

Both are correct. How would a simple script automatically score this? (Hint: It can't!)
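To make the problem concrete, here is a minimal sketch of what a "simple script" might do, assuming it scores by exact string match against a single reference answer. The function name `exact_match_score` is hypothetical and used only for illustration.

```python
def exact_match_score(answer: str, reference: str) -> int:
    """Return 1 only if the normalized strings are identical, else 0."""
    return int(answer.strip().lower() == reference.strip().lower())


# The two answers from the example above; both are correct.
reference = "Due to Rayleigh scattering."
candidates = [
    "Due to Rayleigh scattering.",
    "Gases in the atmosphere scatter sunlight.",
]

scores = [exact_match_score(c, reference) for c in candidates]
print(scores)  # the second, equally valid answer gets no credit
```

The second answer scores zero even though it is correct, which is exactly the gap the rest of this lesson addresses.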

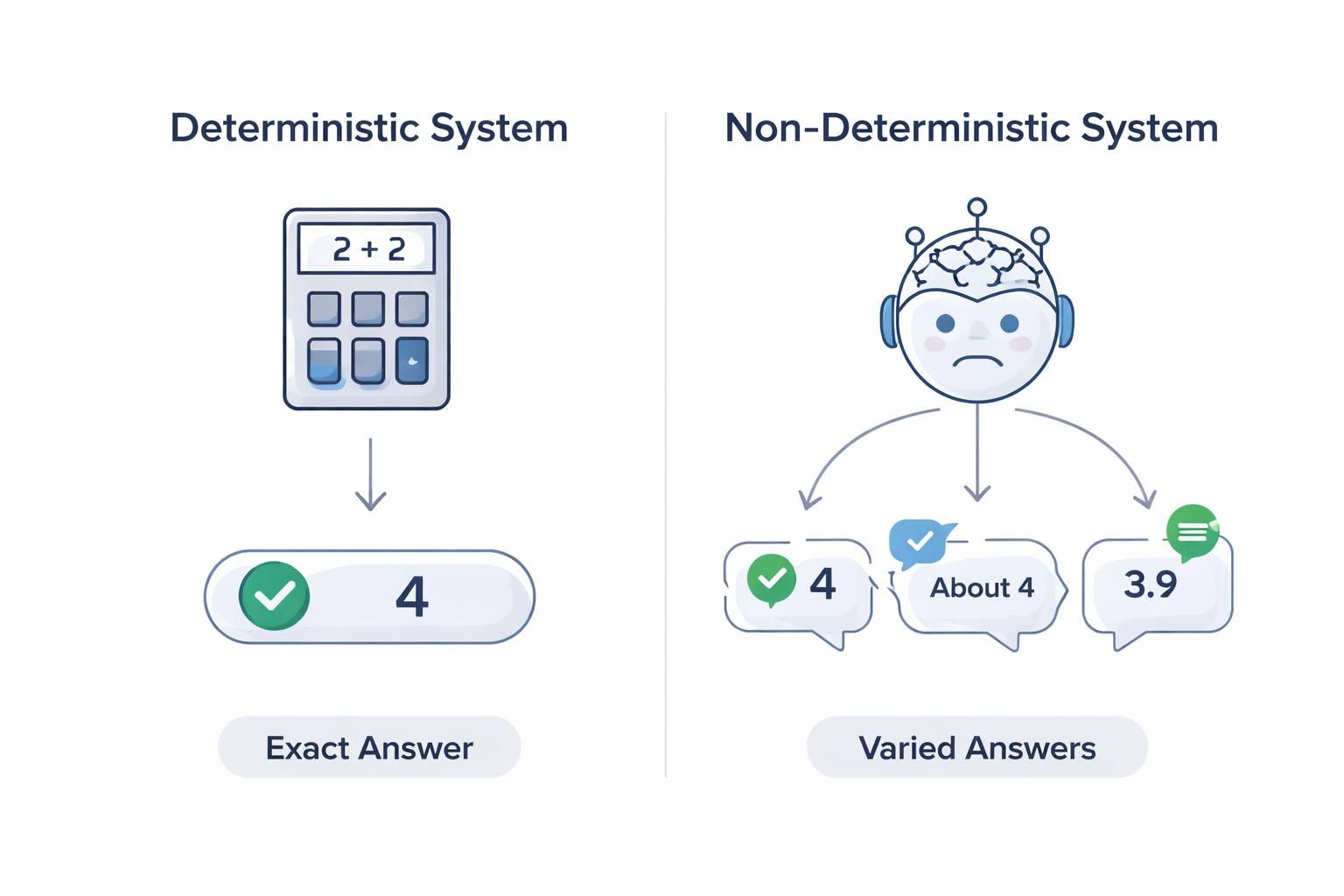

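Welcome to the complex world of LLM evaluation! Assessing deterministic software is comparatively easy: for a given input, the output either matches the expected value or it doesn't. Large Language Models (LLMs) are different. The same prompt can produce many differently worded answers, and several of them may be equally valid.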

In this lesson, we will dive deep into how to test, measure, and improve these non-deterministic AI systems to ensure they are safe, accurate, and reliable.

Interactive: Human vs. Automated Evaluation

Because language is subjective, how do we grade it? There are two main approaches.

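Human Evaluation

Having human experts rate and rank outputs is widely treated as the gold standard for capturing nuance, safety, and cultural alignment. Real people can judge tone and subjective accuracy. (Similar human preference ratings are also used to train models via RLHF, but RLHF itself is a training technique, not an evaluation method.)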

Pros: Captures deep nuance and human alignment.

Cons: Slow, expensive, and subject to individual bias.

Automated Evaluation (LLM-as-a-Judge)

This approach uses another capable model (such as GPT-4) to grade the system's output against a specific rubric. It allows teams to test thousands of responses in minutes.

Pros: Fast, scalable, and repeatable as long as the judge model, rubric, and sampling settings are held fixed.

Cons: Automated judges can miss deep context or show biases (e.g., preferring longer answers or lists).
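The LLM-as-a-Judge pattern can be sketched roughly as follows. This is an illustrative outline, not a specific vendor's API: the rubric text, the 1-to-5 scale, and the names `build_judge_prompt`, `parse_score`, `judge`, and `fake_judge_model` are all assumptions. The judge model is passed in as a plain callable so that a real API client could be swapped in for the stub.

```python
import re
from typing import Callable, Optional

# Hypothetical rubric; a real one would be tailored to the task.
RUBRIC = (
    "Rate the ANSWER to the QUESTION from 1 (wrong) to 5 (correct and clear). "
    "Reply with the number only."
)


def build_judge_prompt(question: str, answer: str) -> str:
    """Combine the rubric, question, and answer into one grading prompt."""
    return f"{RUBRIC}\n\nQUESTION: {question}\nANSWER: {answer}\nSCORE:"


def parse_score(raw_reply: str) -> Optional[int]:
    """Extract a standalone digit from 1 to 5; return None if there is none."""
    match = re.search(r"\b([1-5])\b", raw_reply)
    return int(match.group(1)) if match else None


def judge(question: str, answer: str,
          call_judge_model: Callable[[str], str]) -> Optional[int]:
    """Ask the judge model for a score and parse its reply."""
    return parse_score(call_judge_model(build_judge_prompt(question, answer)))


def fake_judge_model(prompt: str) -> str:
    """Stub standing in for a real model call; always replies with a 4."""
    return "Score: 4"


result = judge(
    "Why is the sky blue?",
    "Gases in the atmosphere scatter sunlight.",
    fake_judge_model,
)
print(result)
```

Returning `None` for an unparseable reply, rather than guessing a score, keeps judge failures visible in the results.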

Golden Datasets & Prompt Testing Strategies

To ensure reliability, teams must build rigorous test suites. This usually starts with a Golden Dataset—a curated list of standard queries, edge cases, and adversarial prompts acting as the ground truth.

- Regression Testing: Before deploying an update, run your Golden Dataset to ensure the new model or prompt still handles previous questions correctly.

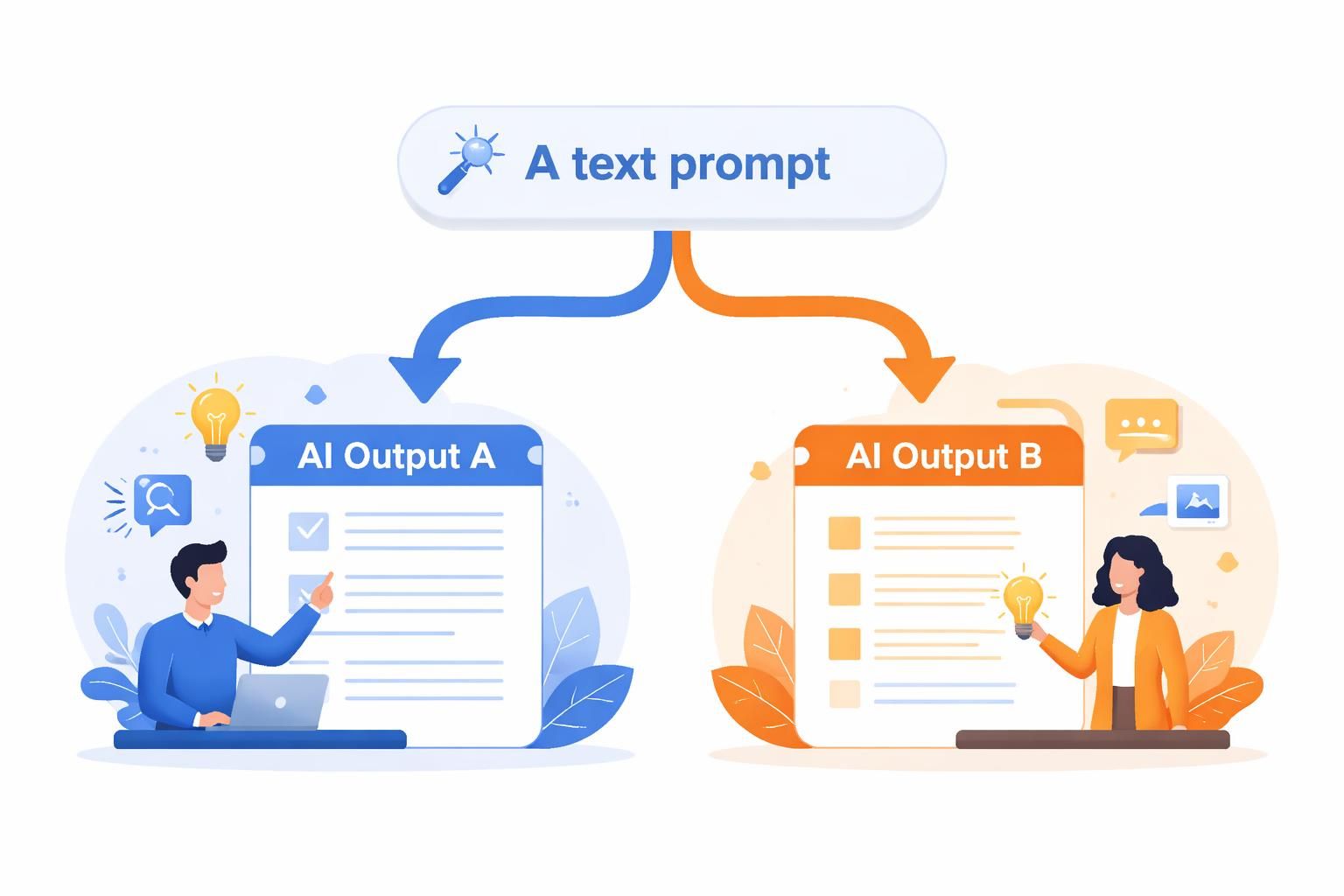

- A/B Testing: Run two different versions in production and measure which one real users prefer via implicit or explicit feedback.

Without structured testing, an innocent tweak to a prompt can silently degrade performance on other tasks.

Never change a production prompt without running it against your test suite first!
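A regression run over a Golden Dataset might look like the sketch below. The dataset entries, the keyword-based pass criterion (`must_mention`), and the stubbed `candidate_system` are all assumptions made for illustration. In practice the check could be an LLM-as-a-Judge rubric instead of a keyword match.

```python
from typing import Callable, Dict, List

# Hypothetical golden dataset: each case lists terms a good answer must contain.
GOLDEN_DATASET: List[Dict] = [
    {"query": "Why is the sky blue?", "must_mention": ["scatter"]},
    {"query": "What is 2 + 2?", "must_mention": ["4"]},
]


def run_regression(generate: Callable[[str], str],
                   dataset: List[Dict]) -> List[str]:
    """Return the queries whose output is missing a required term."""
    failures = []
    for case in dataset:
        output = generate(case["query"]).lower()
        if not all(term.lower() in output for term in case["must_mention"]):
            failures.append(case["query"])
    return failures


def candidate_system(query: str) -> str:
    """Stub for the updated prompt or model under test."""
    canned = {
        "Why is the sky blue?": "Gases in the atmosphere scatter sunlight.",
        "What is 2 + 2?": "The answer is four.",
    }
    return canned.get(query, "")


failures = run_regression(candidate_system, GOLDEN_DATASET)
print(failures)  # non-empty list means the update should not ship yet
```

The second case fails because the stub spells out "four" instead of "4". That is a useful reminder that keyword checks are brittle, and that a failure should prompt a look at both the system and the test.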

Hallucination Detection & Mitigation

A "hallucination" happens when an LLM confidently generates false or nonsensical information. It sounds highly plausible but is factually incorrect.

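To detect this, evaluators often rely on grounding checks. In a Retrieval-Augmented Generation (RAG) setup, the model answers from retrieved source documents, so each claim in the output can be traced back to a known source. If a claim isn't supported by the source, it's flagged as a hallucination.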
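The tracing step described above can be approximated with a deliberately naive sketch: flag any sentence whose content words mostly do not appear in the source document. Word overlap is a crude stand-in chosen only for illustration. Production systems more commonly use entailment models or an LLM judge. The stopword list, the 0.6 threshold, and the function names are assumptions.

```python
import re
from typing import List, Set

# Small illustrative stopword list; not exhaustive.
STOPWORDS = {"the", "a", "an", "is", "in", "of", "to", "and", "it", "by", "was", "are"}


def content_words(text: str) -> Set[str]:
    """Lowercase the text and keep alphanumeric tokens that are not stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def unsupported_sentences(answer: str, source: str,
                          threshold: float = 0.6) -> List[str]:
    """Return sentences whose share of words found in the source is below threshold."""
    source_words = content_words(source)
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = content_words(sentence)
        if not words:
            continue
        support = len(words & source_words) / len(words)
        if support < threshold:
            flagged.append(sentence)
    return flagged


source = ("The sky appears blue because gases in the atmosphere "
          "scatter short-wavelength sunlight.")
# The second sentence is deliberately made up and has no support in the source.
answer = ("Gases in the atmosphere scatter sunlight. "
          "This was discovered by Isaac Newton in 1650.")

flagged = unsupported_sentences(answer, source)
print(flagged)
```

A check like this only tests whether the source supports a sentence, not whether the sentence is true, and a paraphrase with different vocabulary would be flagged incorrectly.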

Key mitigation techniques include:

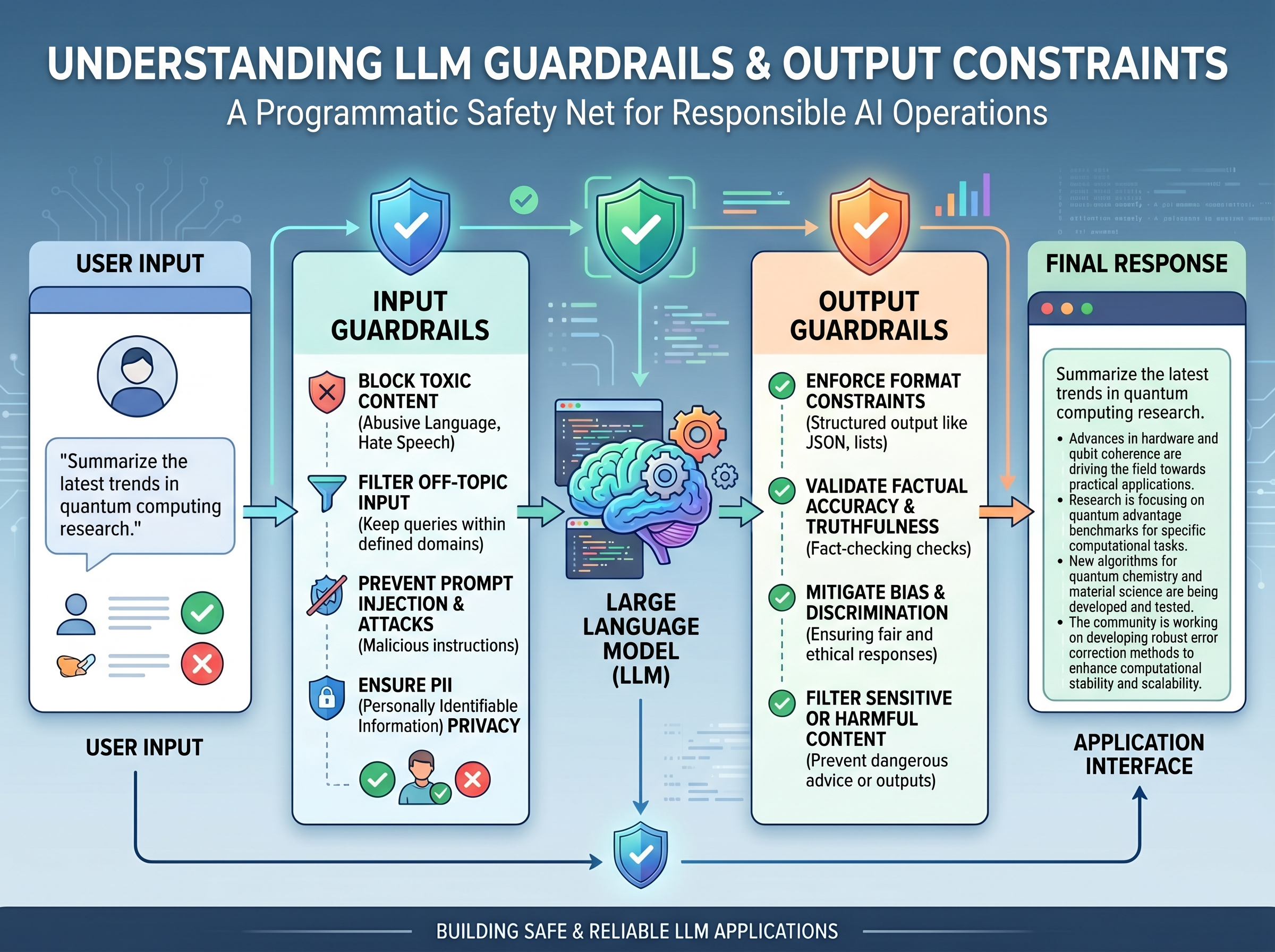

Guardrails and Output Constraints

To keep an LLM operating safely within bounds, developers use Guardrails. Think of these as a programmatic safety net between the user, the LLM, and the application.
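As one possible illustration, an output guardrail can be a small function that inspects the model's text before the application shows it to the user. The two rules here (redacting email addresses and blocking overly long replies), the 500-character limit, and all names are assumptions chosen for the sketch, not a standard.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Simplified email pattern for illustration; real PII detection is broader.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
MAX_CHARS = 500  # hypothetical length limit
FALLBACK_MESSAGE = "Sorry, I can't share that response."


@dataclass
class GuardrailResult:
    allowed: bool
    text: str
    reasons: List[str] = field(default_factory=list)


def apply_output_guardrail(llm_output: str) -> GuardrailResult:
    """Redact emails, then block the reply entirely if it is too long."""
    reasons = []
    text = llm_output
    if EMAIL_PATTERN.search(text):
        text = EMAIL_PATTERN.sub("[redacted email]", text)
        reasons.append("email_redacted")
    if len(text) > MAX_CHARS:
        reasons.append("too_long")
        return GuardrailResult(False, FALLBACK_MESSAGE, reasons)
    return GuardrailResult(True, text, reasons)


result = apply_output_guardrail("Contact me at jane.doe@example.com for details.")
print(result.text)
```

Recording a `reasons` list alongside the decision makes it possible to audit later how often each rule fires.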

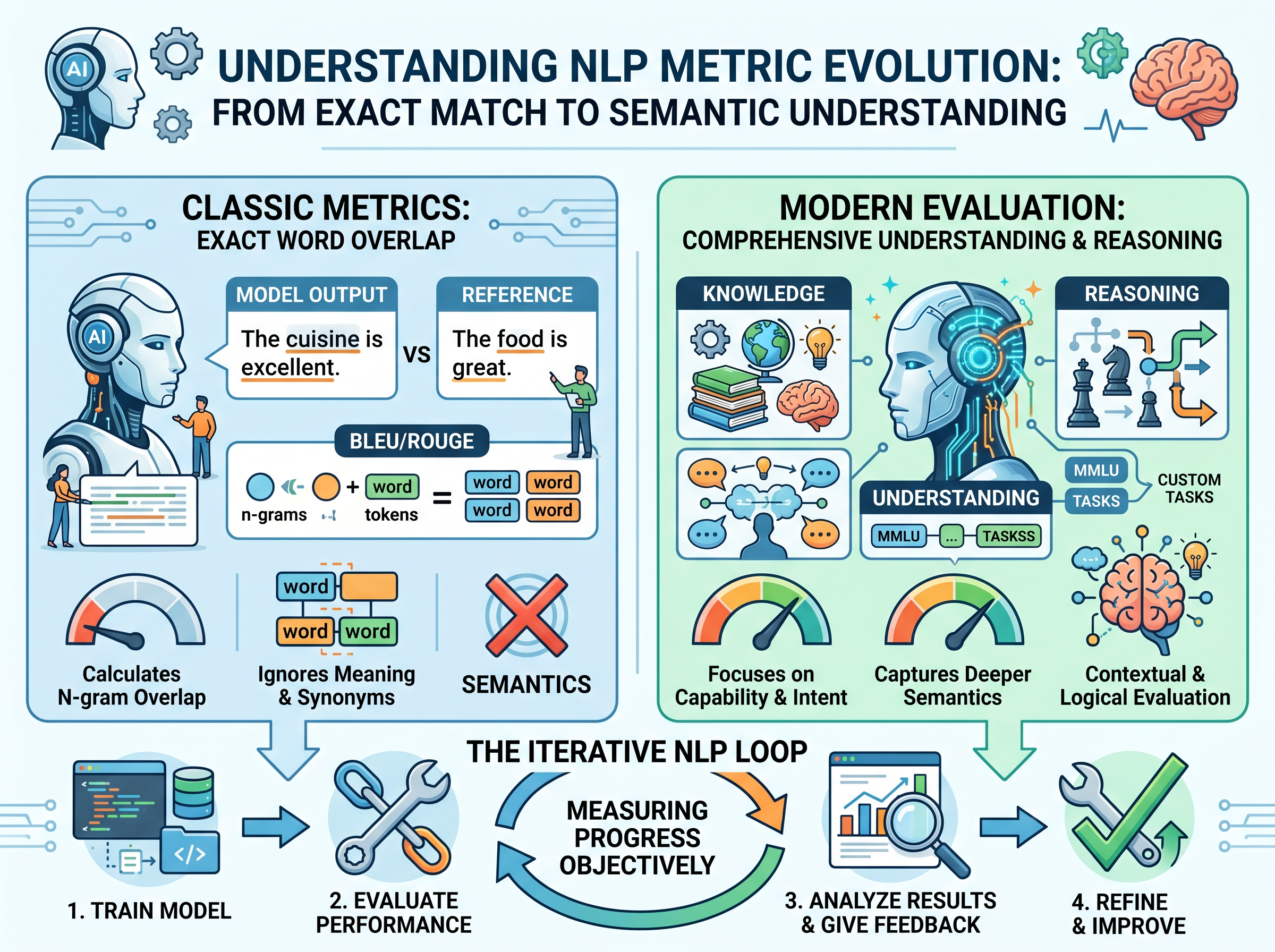

Benchmarking & The Iterative Loop

To measure progress objectively, the industry uses standardized benchmarks and metrics.

Classic NLP metrics like BLEU or ROUGE measure n-gram (word sequence) overlap between the output and a reference text, so they often capture semantics poorly: a correct paraphrase can score low. Modern evaluation relies more on benchmarks like MMLU for knowledge, or on custom LLM-as-a-Judge rubrics to capture intent.
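To see why overlap metrics struggle with paraphrases, consider this simplified unigram-overlap F1 score. It is in the spirit of ROUGE-1 but is not the official implementation (no stemming, no multi-reference support). The function name `unigram_f1` is hypothetical.

```python
import re
from collections import Counter


def unigram_f1(candidate: str, reference: str) -> float:
    """F1 over shared lowercase word tokens between candidate and reference."""
    cand = Counter(re.findall(r"[a-z0-9]+", candidate.lower()))
    ref = Counter(re.findall(r"[a-z0-9]+", reference.lower()))
    overlap = sum((cand & ref).values())  # shared tokens, respecting counts
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "Due to Rayleigh scattering."
paraphrase = "Gases in the atmosphere scatter sunlight."

print(unigram_f1(reference, reference))
print(unigram_f1(paraphrase, reference))
```

The two sky answers share no exact tokens ("scatter" and "scattering" differ without stemming), so the paraphrase scores zero despite being correct.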

Building a reliable system requires a continuous Iterative Loop:

Assessment Question 1

Why is traditional unit testing largely ineffective for evaluating LLM outputs?

Assessment Question 2

What is the primary purpose of an "Output Guardrail" in an LLM system?

Assessment Question 3

In the context of LLM evaluation, what is a "Golden Dataset"?

Assessment Question 4

Which mitigation technique involves tracing the model's output back to a known source document to prevent hallucinations?

Assessment Question 5

Why are classic NLP metrics like BLEU or ROUGE often insufficient for modern LLM evaluation?